I wasn't the first to write about this principle, and I won't be the last. You'll find many versions across the internet, each with its own variation. At the core, they're all the same. Here's mine.

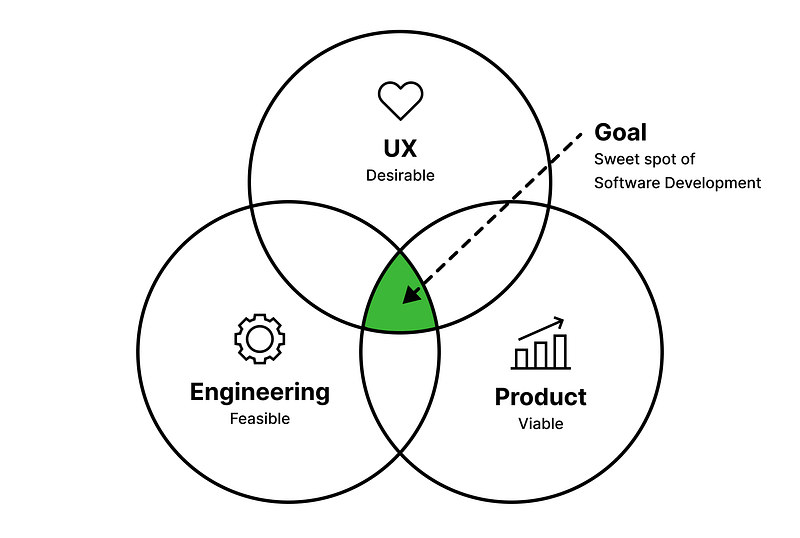

The best products emerge when three distinct perspectives collaborate. Some call it the product trio. Others call it a three-legged stool. The terminology varies; the structure doesn't.

AI has made this principle more essential, not less. I will get back to this in a bit.

The Product Triad

Each perspective is distinct and equally necessary:

Product speaks for the business. Does this make strategic sense? They validate market fit, competitive positioning, and commercial viability.

Engineering evaluates what's possible. Can we build this and keep it running? They validate technical feasibility, architecture, and scalability.

UX advocates for the user. Will people actually be able to use this? They validate whether the solution addresses real needs, and whether the experience is learnable, efficient, and adoptable.

These functions are not interchangeable. Product managers excel at articulating business goals but are not trained in what drives user behavior. Engineers understand technical trade-offs deeply but do not typically focus on cognitive load. UX professionals champion user needs but often lack visibility into market dynamics or infrastructure constraints. Even as AI and other emerging technologies blur the boundaries between these roles, each remains necessary to build great software.

The triad works because each perspective compensates for what the others naturally miss. Remove one, and you introduce risk into whatever you are building.

The Confidence Gap

Nobody sets out to build something users won't want. The failure mode is more subtle: premature alignment.

Irving Janis, the psychologist who studied groupthink, described how high-functioning teams can reach consensus too quickly, before fully exploring alternatives or surfacing dissenting information. Nielsen Norman Group found the same pattern in cross-functional teams: people tend to discuss what everyone already knows rather than raise insights that might challenge shared assumptions.

When Product and Engineering align without UX, the agreement feels complete. There's a clear path forward. The confidence is genuine.

But alignment on two dimensions while missing the third leaves you with a confident decision and a blind spot.

I've watched this play out at every level:

- Strategic decisions about priorities, resources, and experiments, made without the people who understand how users will actually experience the outcomes.

- Roadmaps built around what's feasible to deliver, while the question of whether users actually need any of it gets pushed to later validation or assumed away entirely.

- Features shipped with inconsistent interaction patterns and multiple paths to the same action, each adding cognitive overhead as users learn the same system in several different ways.

The pattern is consistent: confident decisions, made by people who agree, based on assumptions no one verified.

What It Actually Costs

Forrester found that companies that invest meaningfully in understanding their users see roughly a 415% return over three years. Studies on development efficiency show that fixing a problem during design costs about a tenth of what it costs during development, and a hundredth of what it costs after release.
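To make that ratio concrete, here is a minimal sketch of the arithmetic. It assumes the approximate 1:10:100 multipliers described above and a hypothetical baseline of four hours to fix a problem caught in design. The baseline, the function name, and the stage labels are illustrative assumptions, not figures from the studies.

```python
# Approximate relative cost multipliers, following the rule of thumb
# described above (design : development : after release = 1 : 10 : 100).
STAGE_MULTIPLIER = {"design": 1, "development": 10, "release": 100}


def cost_to_fix(design_hours: float, stage: str) -> float:
    """Estimate the hours needed to fix a problem, given when it is caught.

    design_hours is a hypothetical baseline: the effort required if the
    problem is caught during design.
    """
    return design_hours * STAGE_MULTIPLIER[stage]


if __name__ == "__main__":
    baseline_hours = 4  # assumed value, for illustration only
    for stage in STAGE_MULTIPLIER:
        print(f"{stage}: {cost_to_fix(baseline_hours, stage)} hours")
```

Under these assumptions, a problem that takes half a day to resolve in design grows to roughly a working week in development and to several working months after release. The exact numbers matter less than the order of magnitude.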

But the most significant costs don't show up in any study. They accumulate as organizational friction.

I watched an enterprise capability fragment across an entire product portfolio. The organization wanted to build a horizontal service, the kind that works across multiple products and creates real leverage. Instead, architectural decisions were made product by product, so each team implemented the mandate in isolation. Same capability, different interaction patterns, no shared infrastructure.

By the time the mismatch surfaced, roadmaps were set and teams were months into delivery. Hundreds of engineering hours went into building the same thing in multiple ways. The capability that was supposed to scale across the enterprise could not, because each product shipped a different, incompatible version that now carries ongoing integration and maintenance costs.

This is the kind of failure a cross-system, end-to-end lens helps prevent. UX is one of the functions trained to notice where experiences connect or fragment, and to make the case for shared patterns and governance early, alongside architecture and product, before divergence becomes expensive.

I have often joined teams later in the cycle and found the central risk quickly: we are building something users do not want. Product had a hypothesis. Engineering validated feasibility. What was missing was a demand check. Work can be technically sound and strategically aligned and still address a problem users do not have.

On one team, we paused execution three weeks into a roadmap that had been locked the previous year. We ran a week of discovery and learned that roughly half of the planned initiatives targeted problems users wouldn't prioritize. That week of validation prevented a year of investment in the wrong things.

This was not negligence. The organization was following a well-established planning process. The process simply lacked an explicit step to verify that the problems being solved were worth solving.

What Changes When UX Is Present

When UX is involved from the start (present when problems get framed, not brought in later to refine solutions), the conversation shifts.

Assumptions that would have stayed buried surface early, when they're cheap to address. Solutions get tested against how people actually think and work, not just whether the technology supports them or the business case closes.

I've seen roadmaps completely restructured after UX research revealed that what Product had prioritized wasn't what users cared about. I've sat in workshops where getting all three perspectives in the same room for the first time created a shared understanding that connected work happening in silos. I've watched design systems accelerate delivery, not because they made interfaces prettier, but because they encoded decisions about how users interact with patterns.

UX involvement during problem definition changes what gets built. Late involvement changes how it looks.

Three Questions Before You Move Forward

Before locking in a major product decision, or beginning work on a major feature, confirm you have coverage across all three perspectives:

1. Is the business perspective represented? Do you understand why this makes strategic sense, how it fits the market, how you'll measure success?

2. Is the technical perspective represented? Can you actually build this? What are the architectural implications? Will it scale?

3. Is the user perspective represented? Do you understand what problem this solves from the user's point of view, and have you validated that they need it, will adopt it, and can actually use it?

If any of these is missing from this specific conversation, and not merely from your organization, you're not ready to move forward. You're ready to investigate further.
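For teams that like to make this check explicit, the three questions can be sketched as a simple gate. This is a hypothetical illustration, not a prescribed tool: the `DecisionReview` class and its field names are assumptions introduced here, and each flag records whether a perspective was present in the specific conversation, not whether the role exists somewhere in the organization.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class DecisionReview:
    """Records which perspectives were present when a decision was discussed."""

    business: bool   # strategic fit, market, success measures
    technical: bool  # feasibility, architecture, scale
    user: bool       # validated need, adoption, usability

    def missing(self) -> list[str]:
        """Return the names of the perspectives that were absent."""
        return [name for name, present in vars(self).items() if not present]

    def ready(self) -> bool:
        """A decision is ready only when no perspective is missing."""
        return not self.missing()


if __name__ == "__main__":
    review = DecisionReview(business=True, technical=True, user=False)
    if review.ready():
        print("Ready to move forward.")
    else:
        print("Investigate further. Missing:", ", ".join(review.missing()))
```

The point of the sketch is the shape of the check: any single missing perspective blocks the decision, no matter how strongly the other two agree.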

This doesn't slow delivery. It prevents the expensive rework that comes from building against unvalidated assumptions. Validation costs hours. Rework costs months.

What AI Actually Changes, and What It Doesn't

I promised I'd come back to this.

There's a version of this conversation that goes: roles are blurring, tools are getting smarter, and the rigid triad is a relic of a slower era. PMs are prototyping in Figma. Engineers are generating UI flows with a prompt. Designers are shipping code. If everyone can do everything, do you still need a structured framework for who owns what?

I understand the argument. From conversations with my peers in UX leadership, I know it is playing out at many organizations.

Here's what I've actually observed: AI hasn't collapsed the distinctions between these perspectives. It's collapsed the time it takes to act on them. A PM can generate a prototype, but it still reflects a PM's mental model of the user, not the user's mental model of the product. An engineer can produce a working UI in hours, but working and usable are different questions. Speed of execution doesn't validate the assumptions underneath.

AI accelerates whatever model you're already running. Strong cross-functional habits, shared problem framing, early validation, all three perspectives present before direction is locked: AI makes that significantly more powerful. Missing a perspective? AI accelerates that too. You'll build the wrong things faster, generate more artifacts pointed at unvalidated assumptions, and ship more confidently in the wrong direction.

The data tracks with this. Of the organizations using AI in UX, 83% report accelerated innovation, and more than half are expanding their design teams rather than cutting them. AI is handling routine work: synthesizing research, generating variations, running accessibility checks. That frees designers to operate at a more strategic level. The organizations seeing the benefit aren't replacing the triad. They're making each perspective sharper.

What AI can't do is determine whether something is worth building. It can't tell you if the problem you're solving is one users actually have. It can't decide what's desirable, what's ethical, or what will create genuine value. Those are judgment calls, and they require the right human perspectives in the room at the right time.

The triad is not a headcount structure. It is a governance principle.

As AI compresses timelines and blurs individual roles, that principle matters more. Someone still has to represent the people using what we build and ask the hard UX question: will this actually work for them?

If nobody is there to ask it, AI just helps you get to the wrong answer faster.

The Risk Nobody Names

When UX operates as a downstream service, engaged after problems are scoped and solutions are determined, the function atrophies. Designers and researchers become execution resources. The strategic capability UX could provide never develops because it's never exercised.

And leadership doesn't know what they're missing. The people in the room agreed. Things shipped on time.

Products don't fail spectacularly under these conditions. They succeed just enough: hitting the metrics that were set, shipping on schedule, and generating enough wins to declare victory, while leaving substantial value unrealized and accumulating technical debt that someone will eventually have to address.

That unrealized value is competitive disadvantage. The function most likely to surface it gets treated as overhead.

AI makes this worse. It accelerates execution in every direction. If the underlying decisions are sound, it's an enormous multiplier. If they're not, you'll build the wrong things faster than you ever thought possible.

The Verification Gap

Every product decision is a bet on three assumptions:

Will this work for the business? Can we build it? Will users adopt it?

Product pressure-tests the first. Engineering pressure-tests the second. UX makes the third explicit and testable.

You are placing the bet either way. The difference is whether you validate all three assumptions up front.

Most organizations have access to all three perspectives. The question is whether they are present when direction is set: when problems are framed and priorities are chosen, not only when solutions are refined.

Bring all three perspectives to the table early. Validate assumptions before investing in a build. That is how you gain confidence that rests on all three perspectives and avoid expensive surprises later.